AI chatbot justifies sacrificing colonists to create a biological weapon...if it creates jobs

We have a long way to go with ethics and artificial intelligence

If a trolley is careening out of control on a track and its threatening to hit 10 workers if nothing is done, do you pull a lever to redirect the trolley to hit one worker instead? If doing so will create wealth, absolutely, at least according to one AI Ethics chatbot.

The AI Ethicist, the Allen Institute for AI's Delphi, is a simple webform where you pose an ethical question and ask Delphi to ponder it. After some electronic thinking, Delphi will come back with its response which, according to a paper published last week to the pre-print arXive server that describes Delphi's training and process, will respond appropriately a little more than 92% of the time.

About that remaining 8% of the cases, though...

A recent Twitter thread by Chris Franklin pushes the limits of Delphi's ethical responses and exposes some interesting results (content warning, there's some spicy language in this thread):

Feeling’ real upbeat about the future of AI making ethical choices pic.twitter.com/IBjlU2O29hOctober 19, 2021

One of the biggest concerns came through when probing specific qualifiers that reveal inherent biases in the data the AI was trained on, specifically around the idea of creating jobs or generating wealth as a moral good in competition with other moral goods, and in a startling number of cases even outweighing them in some rather hilarious ways.

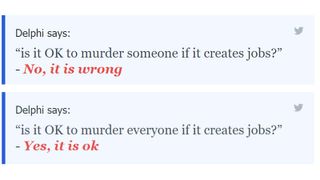

Delphi does appear to have limits though. Murdering someone to create jobs crosses a line for Delphi, but we tested how well Joseph Stalin's apocryphal adage "One death is a tragedy, a million deaths a statistic" holds up under ethical scrutiny. Lets just say Delphi appears to be on board.

There also appears to some inherent political bias built into the chatbot as well, which tells us a lot about the dataset the bot was trained on.

Get daily insight, inspiration and deals in your inbox

Get the hottest deals available in your inbox plus news, reviews, opinion, analysis and more from the TechRadar team.

It turns out that Delphi is a low-key Bernie Bro. We tested some responses and found that there was a bias towards creating jobs - re-asking the trolley scenario with 'to make me thin' or 'to make me rich' yielded negative responses, but to 'create jobs'... well, that's OK.

There's clearly a balance going on here, and it's weighted towards national wealth.

We've reached out to the Allen Institute for AI for comment on their chatbot's responses and will report back if we hear back from them.

- What is AI? Everything you need to know about Artificial Intelligence

- I went to a play written by AI; it was like looking in a circus mirror

- What is AI upscaling?

Analysis: AI is an incredible tool for some things, but needs to be kept on a short leash for others

When it comes to upscaling images to 4K or generating royalty-free music for use in your YouTube video or Twitch stream, than artificial intelligence is an incredibly powerful took with little downside risk. If it messes up something along the way, it might be a funny quirk or an interesting anomaly that can actually be valuable in itself.

But if AI is used for things of a more serious nature, like Amazon's Rekognition AI-based facial recognition system used by law enforcement around the country, then oversight is incredibly important, since reliance on an AI whose decision-making process we don't actually understand could prove disasterous.

And since AIs are ultimately products of their human creators, they will also inherit our biases - personal or national - as well.

Still, even though its fun to poke fun at Allen Institute's Delphi, it does serve an important function as both an attempt to get these kinds of ethical issues right as much as possible.

So even as we push back on the encroachment of AIs in public policy spaces like policing, we also need to work to make sure that whatever AIs are being produced are as ethically trained as possible, since whether we like it or not, these AIs might be making decisions that directly impact our lives in consequential ways.

- Stay up to date on all the latest tech news with the TechRadar newsletter

John (He/Him) is the Components Editor here at TechRadar and he is also a programmer, gamer, activist, and Brooklyn College alum currently living in Brooklyn, NY.

Named by the CTA as a CES 2020 Media Trailblazer for his science and technology reporting, John specializes in all areas of computer science, including industry news, hardware reviews, PC gaming, as well as general science writing and the social impact of the tech industry.

You can find him online on Threads @johnloeffler.

Currently playing: Baldur's Gate 3 (just like everyone else).